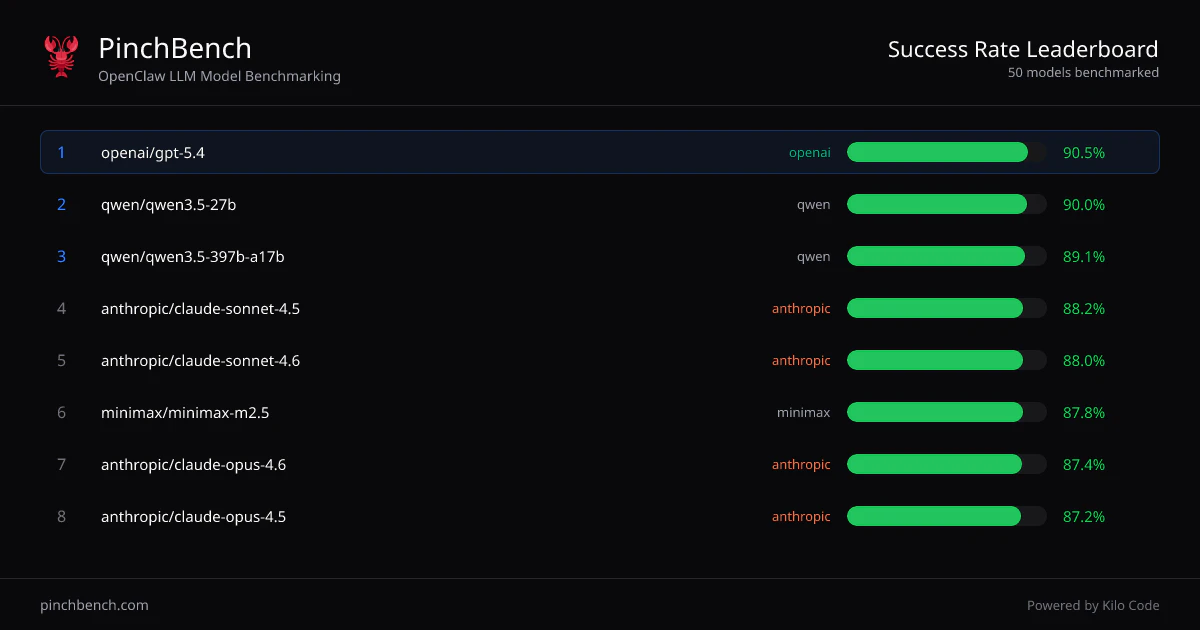

PinchBench

Find the best AI model for your OpenClaw

About PinchBench

PinchBench is a benchmarking platform for evaluating large language models (LLMs) as OpenClaw coding agents. Developed by Kilo Code, the creators of KiloClaw, it lets developers compare models on a standardized set of real-world coding tasks. It measures success rate, execution speed, and cost, so teams integrating LLMs into their workflows can pick the model with the best performance-to-cost ratio for their needs. Because the results come from actual coding tasks rather than theoretical benchmarks, they show how a model is likely to perform in practice.
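To make the performance-to-cost idea concrete, here is a minimal sketch of how one might rank models from success rate, speed, and cost. It is not PinchBench's actual scoring method, which this page does not describe. The `ModelResult` type, the `successes_per_dollar` ratio, the model names, and all numbers are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelResult:
    """One model's aggregate benchmark numbers (hypothetical schema)."""
    name: str
    success_rate: float  # fraction of tasks solved, 0.0 to 1.0
    avg_seconds: float   # mean wall-clock time per task
    cost_usd: float      # total cost of the benchmark run


def successes_per_dollar(result: ModelResult) -> float:
    """Assumed value metric: success rate divided by run cost."""
    if result.cost_usd <= 0:
        raise ValueError("cost_usd must be positive")
    return result.success_rate / result.cost_usd


def rank_by_value(results, min_success_rate=0.0):
    """Drop models below a quality floor, then sort by value.

    Ties on value are broken by speed (faster first).
    """
    eligible = [r for r in results if r.success_rate >= min_success_rate]
    return sorted(
        eligible,
        key=lambda r: (-successes_per_dollar(r), r.avg_seconds),
    )


# Made-up placeholder values, not real benchmark results.
results = [
    ModelResult("model-a", success_rate=0.90, avg_seconds=120.0, cost_usd=3.00),
    ModelResult("model-b", success_rate=0.80, avg_seconds=60.0, cost_usd=1.00),
    ModelResult("model-c", success_rate=0.50, avg_seconds=30.0, cost_usd=0.25),
]

ranked = rank_by_value(results, min_success_rate=0.75)
for r in ranked:
    print(f"{r.name}: {successes_per_dollar(r):.2f} successes per dollar")
```

The quality floor matters: without it, the cheapest model in this made-up data ranks first despite solving only half the tasks, which is rarely what a team wants from a coding agent.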

Pros

- ✓ Provides comprehensive performance metrics including success rate, speed, and cost

- ✓ Standardized benchmarking across multiple LLM models for fair comparison

- ✓ Designed specifically for OpenClaw coding agents, enhancing relevance for developer workflows

- ✓ Made by Kilo Code, known for quality developer-focused tools

- ✓ User-friendly interface for easy comparison and analysis

Cons

- ✗ Focused primarily on OpenClaw agents, limiting broader applicability

- ✗ Details on pricing and integrations are not explicitly provided

- ✗ May require technical expertise to interpret benchmarking results effectively

Pricing

Specific pricing details are not publicly confirmed. PinchBench likely operates on a freemium model, with free access to basic benchmarking features and paid plans offering additional analytics or higher usage limits.
