Ollama v0.19

Massive local model speedup on Apple Silicon with MLX

About Ollama v0.19

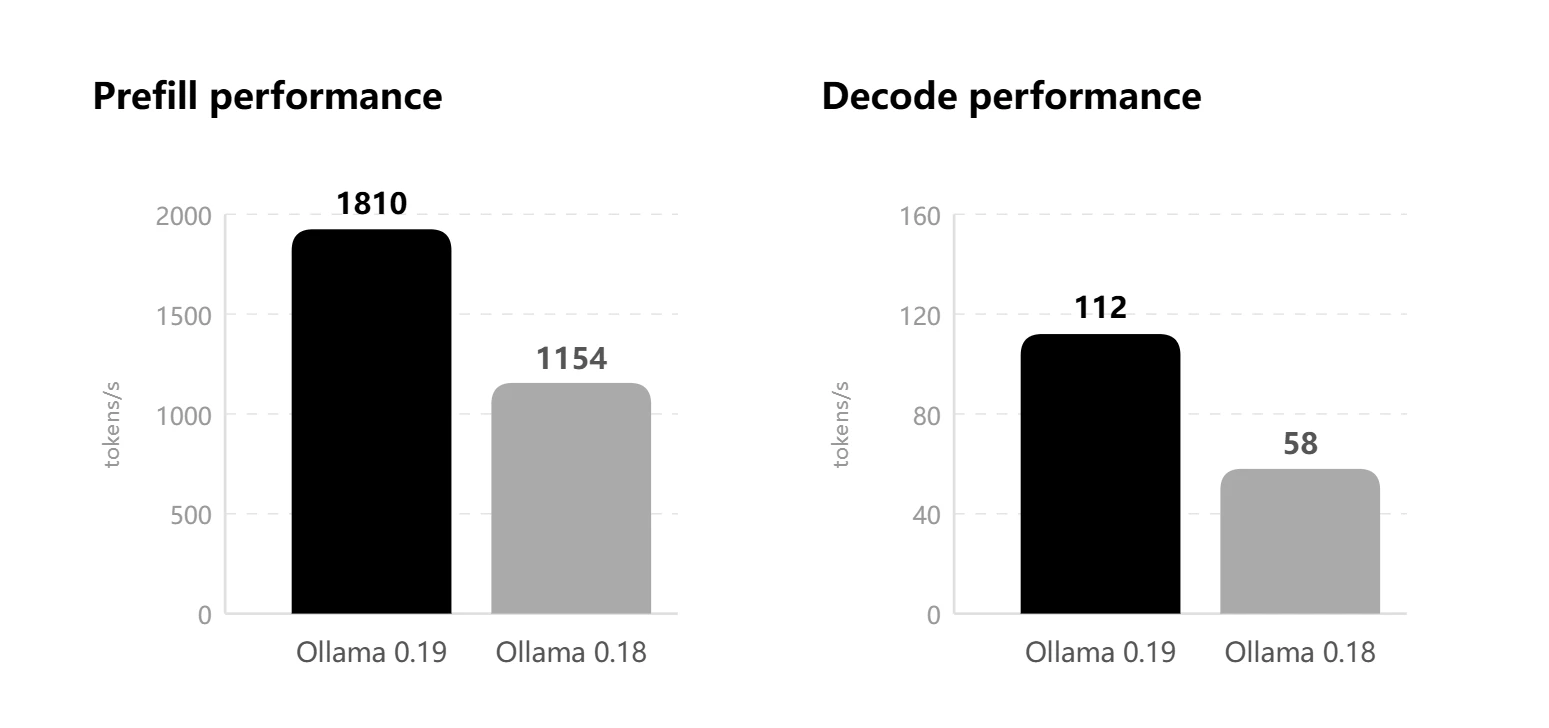

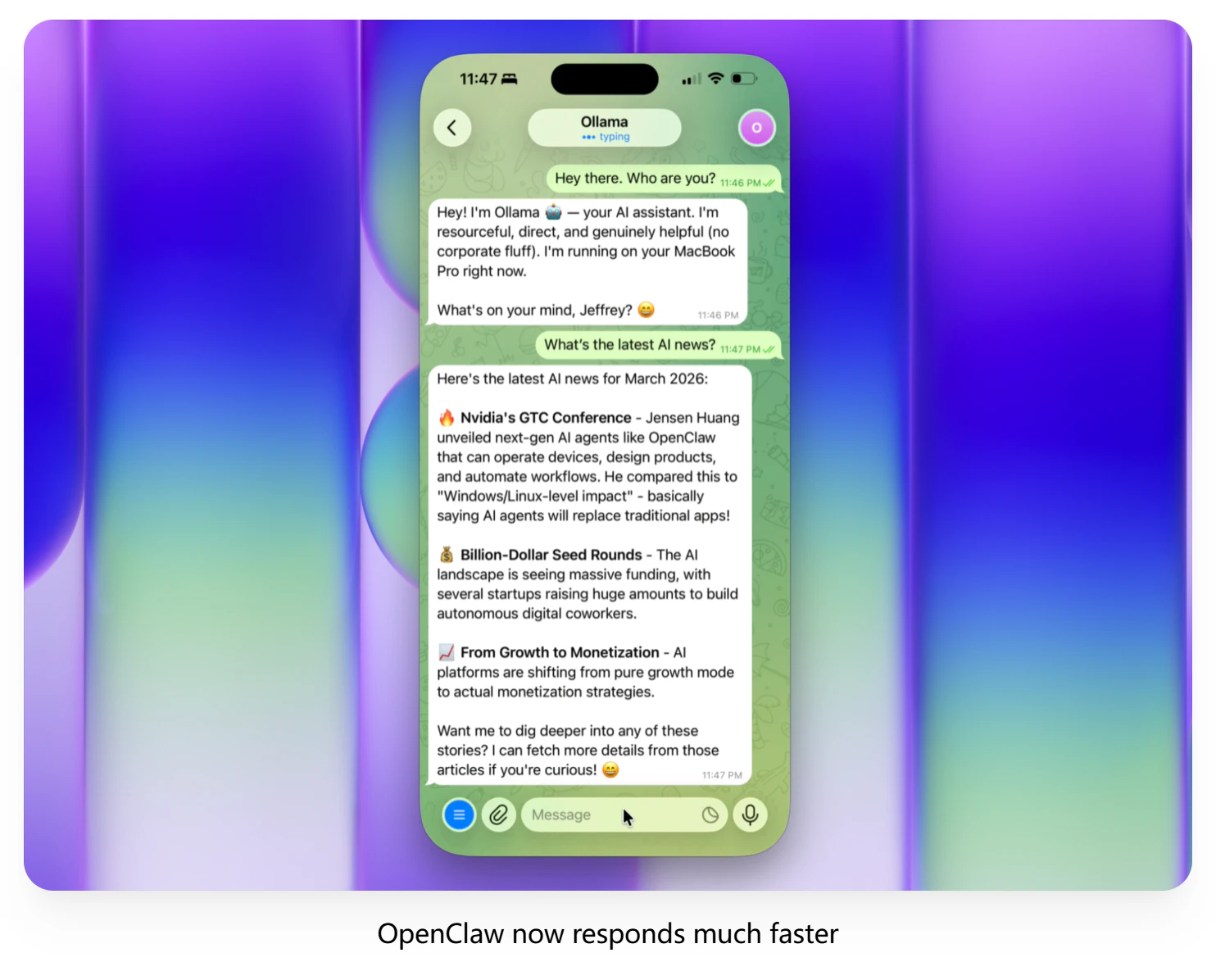

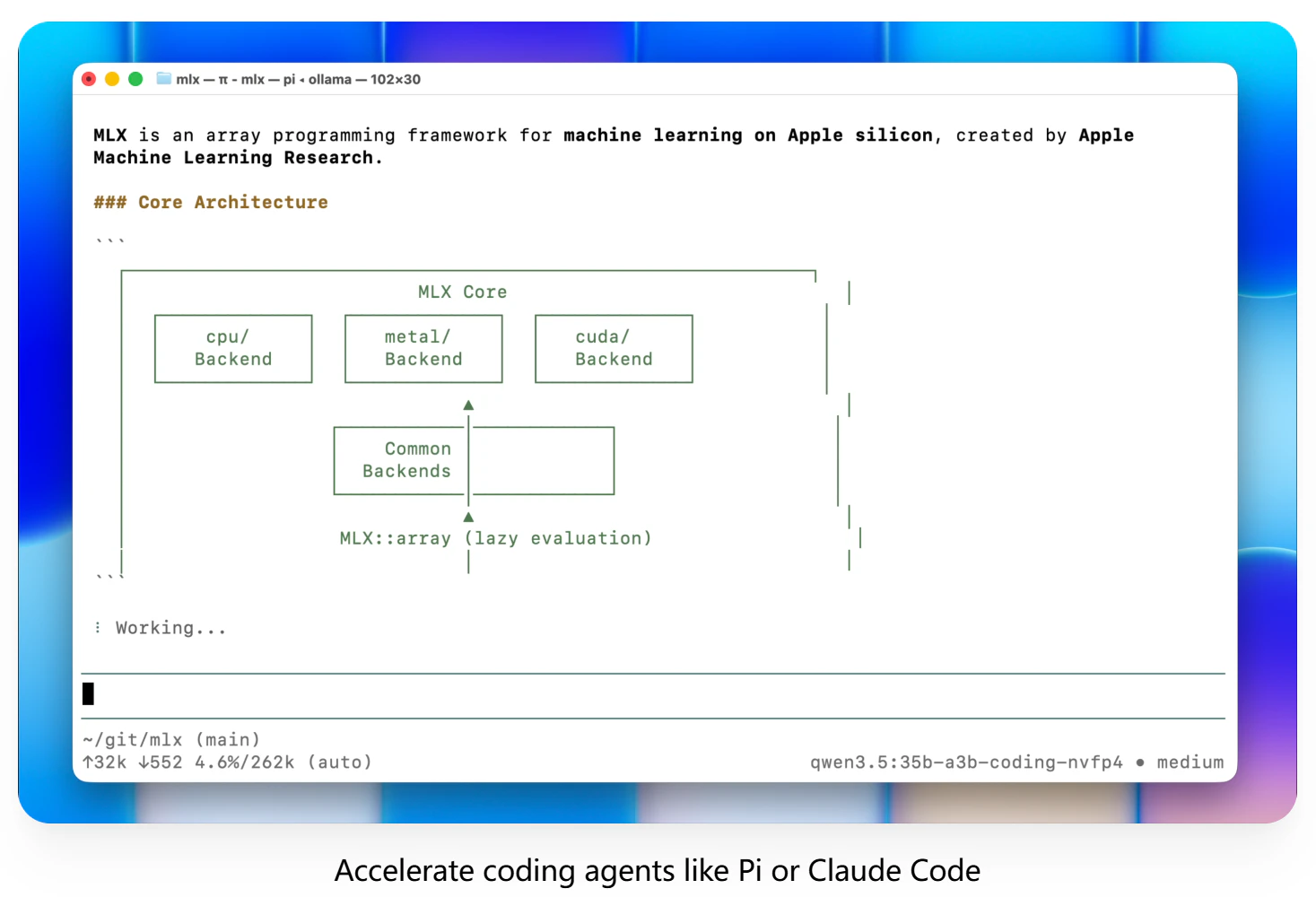

Ollama v0.19 is a release of Ollama, the tool for running AI models locally, focused on faster inference on Apple Silicon. By building on Apple's MLX framework, it delivers significantly faster performance for coding, AI agents, and other workflows that rely on local models. The update also introduces NVFP4 support, along with smarter cache reuse, snapshots, and eviction, which make sessions more responsive and efficient. It is aimed at developers, AI enthusiasts, and researchers who want to run large models on Apple hardware without relying on cloud services. Ollama has an active community and a growing feature set.
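To make the terms cache reuse, snapshots, and eviction more concrete, here is a toy Python sketch of a prompt-prefix cache. It is not Ollama's implementation: the `PrefixCache` class, its method names, and the `max_snapshots` limit are all hypothetical, and the "state" stored per snapshot is just a placeholder string. The idea it illustrates is that a session sharing a prefix with an earlier one (for example, the same system prompt) only needs to process the new tokens, and that old snapshots are dropped when space runs out.

```python
from collections import OrderedDict


class PrefixCache:
    """Toy prompt-prefix cache with LRU eviction (illustrative only)."""

    def __init__(self, max_snapshots=2):
        self.max_snapshots = max_snapshots
        # Maps a tuple of tokens to a placeholder for the saved model state.
        # Insertion order doubles as recency order (oldest first).
        self._snapshots = OrderedDict()

    def __len__(self):
        return len(self._snapshots)

    def longest_prefix(self, tokens):
        """Return the length of the longest stored snapshot that prefixes `tokens`."""
        best = 0
        for prefix in self._snapshots:
            n = len(prefix)
            if best < n <= len(tokens) and tuple(tokens[:n]) == prefix:
                best = n
        if best:
            # Mark the reused snapshot as most recently used.
            self._snapshots.move_to_end(tuple(tokens[:best]))
        return best

    def snapshot(self, tokens):
        """Store a snapshot for `tokens`, evicting the least recently used if full."""
        key = tuple(tokens)
        self._snapshots[key] = f"state after {len(key)} tokens"
        self._snapshots.move_to_end(key)
        while len(self._snapshots) > self.max_snapshots:
            self._snapshots.popitem(last=False)

    def process(self, tokens):
        """Return (tokens reused from cache, tokens that had to be computed)."""
        reused = self.longest_prefix(tokens)
        computed = len(tokens) - reused
        self.snapshot(tokens)
        return reused, computed


if __name__ == "__main__":
    cache = PrefixCache(max_snapshots=2)
    system_prompt = ["you", "are", "a", "coding", "assistant"]
    print(cache.process(system_prompt))                     # nothing cached yet
    print(cache.process(system_prompt + ["fix", "bug"]))    # reuses the system prompt
    print(cache.process(system_prompt + ["write", "tests"]))  # reuses it again, evicts one snapshot
```

In this sketch, recency is tracked with an `OrderedDict`, so the snapshot that keeps getting reused (the shared system prompt) survives while one-off conversations are evicted first. A real inference engine would store key-value tensors rather than strings and would likely use a more sophisticated policy.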

Pros

- ✓ Significant speed improvements on Apple Silicon via MLX

- ✓ Supports NVFP4 for enhanced model performance

- ✓ Smarter cache reuse, snapshots, and eviction for better session management

- ✓ Optimized for local AI inference, reducing dependency on the cloud

- ✓ Strong community support with active development

Cons

- ✗ Primarily optimized for Apple Silicon, limiting cross-platform use

- ✗ May require technical expertise to fully leverage advanced features

- ✗ Potentially limited integration with some cloud-based AI workflows

Pricing

Ollama is open source and free to download and run locally. Pricing for any premium or enterprise options is not specified here.
