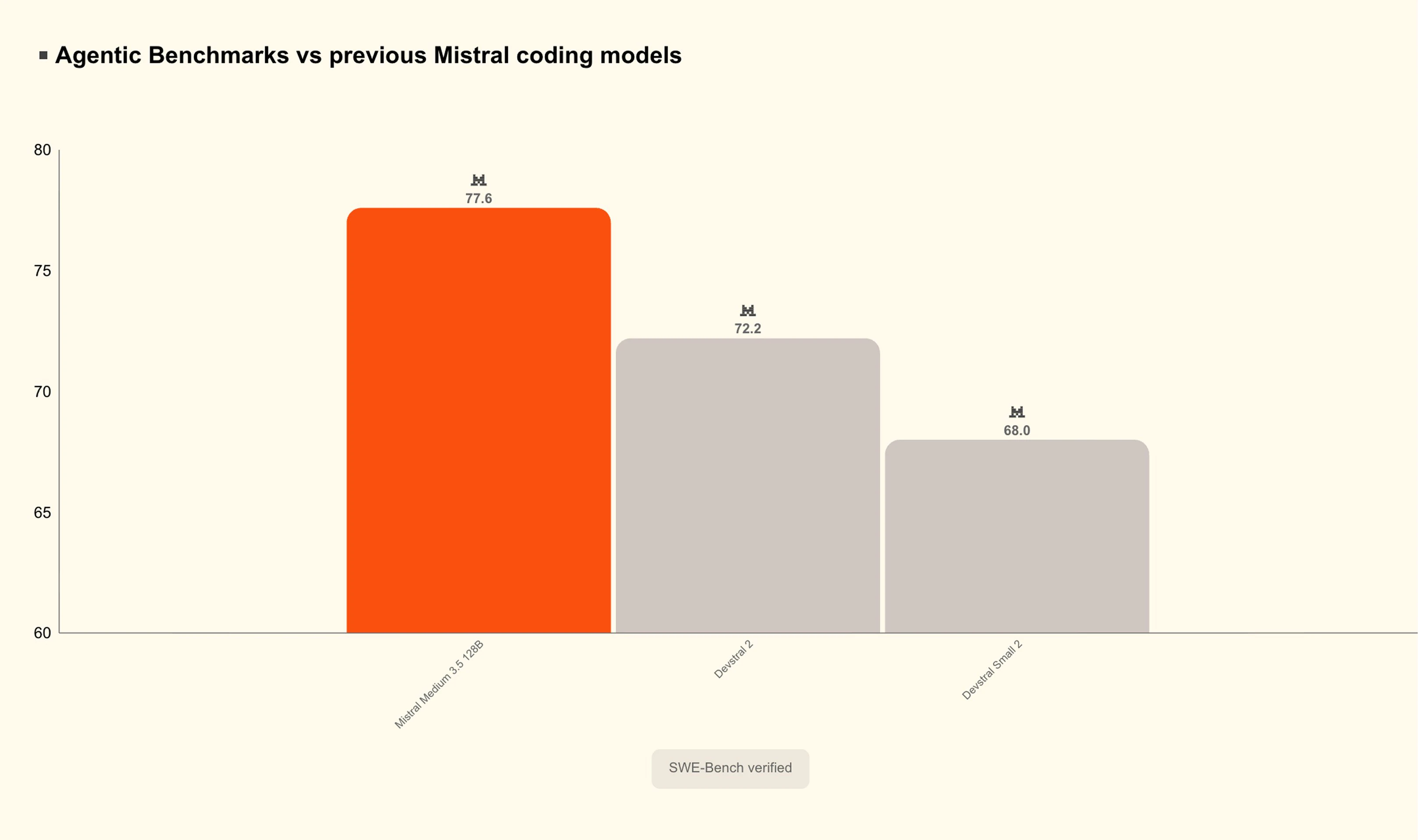

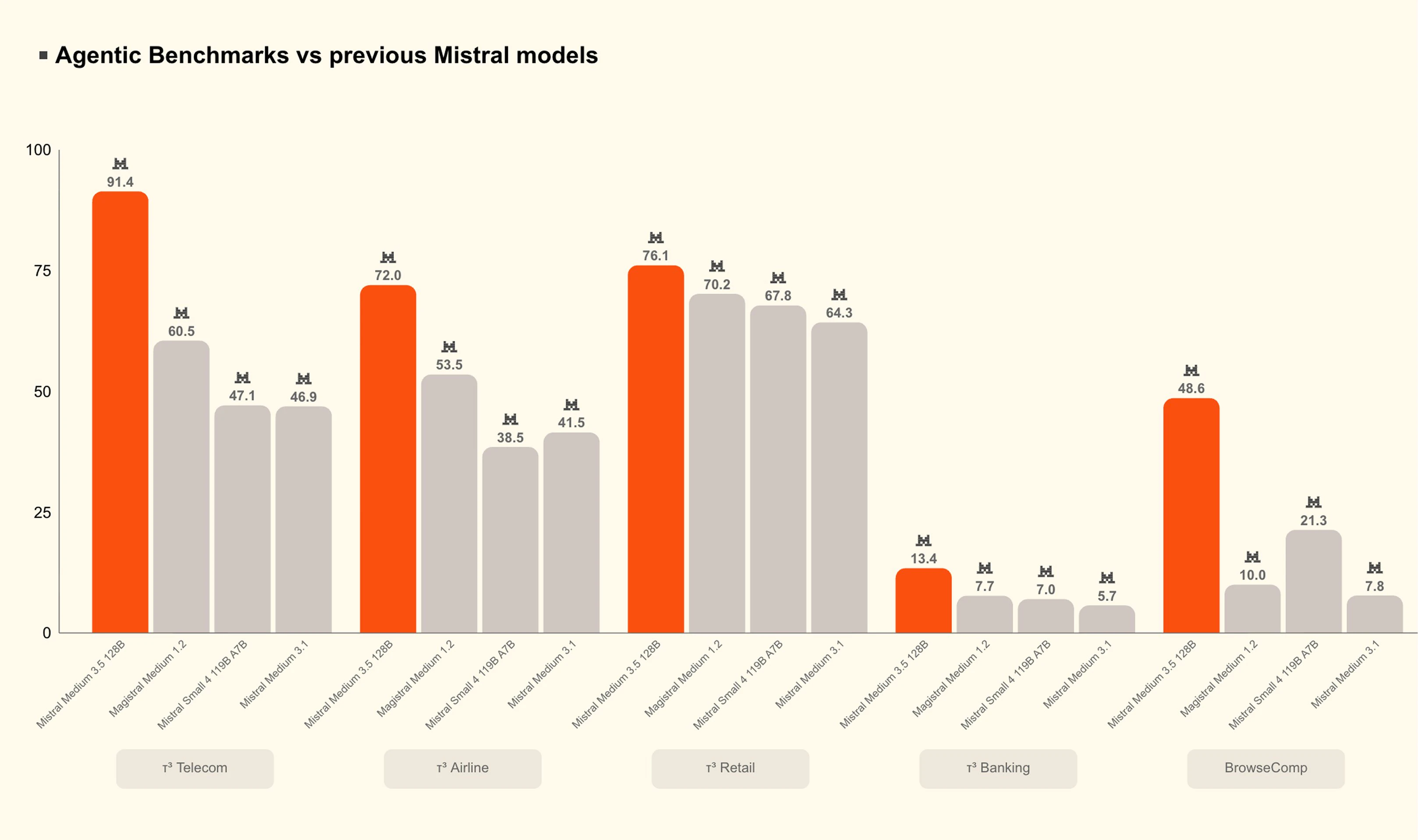

Mistral Medium 3.5

A 128B model for coding, reasoning, and long tasks

About Mistral Medium 3.5

Mistral Medium 3.5 is a dense 128-billion-parameter model for coding, reasoning, and long-running tasks. It consolidates these capabilities into a single set of weights, giving developers and enterprises one model for both language understanding and generation. Its 256k context window accommodates long inputs, which suits tasks that require extended reasoning or detailed code analysis. The weights are available on Hugging Face, so teams can host and customize the model internally, an option for organizations that prioritize data privacy and control. Typical uses include building intelligent assistants, automating coding workflows, and supporting research projects.

Pros

- ✓ Large 128B-parameter model with robust multi-task capabilities

- ✓ Open weights enable self-hosting and customization

- ✓ Extensive 256k context window supports long-form tasks

- ✓ Unified model for coding, reasoning, and instruction-following

- ✓ Suitable for enterprise use and privacy-conscious deployments

Cons

- ✗ Requires significant computational resources for inference

- ✗ Potentially steep learning curve for setup and optimization

- ✗ Limited community support or user base at this stage
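
The compute requirement noted above can be made more concrete with a rough estimate of the memory needed just to hold the weights. The sketch below is a back-of-the-envelope calculation, not a sizing guide: the 128B parameter count comes from this listing, while the bytes-per-parameter values are standard sizes for common numeric formats and are assumptions about how the model might be loaded. It ignores the KV cache, activations, and runtime overhead, all of which add to the total, particularly at long context lengths.

```python
# Rough weight-memory estimate for a dense model (weights only).
# Assumptions (not from the listing): the precisions below are illustrative;
# KV cache, activations, and framework overhead are NOT included.

PARAMS = 128e9  # 128 billion parameters, as stated in the listing

# Bytes needed to store one parameter at each (assumed) precision.
BYTES_PER_PARAM = {
    "fp32": 4.0,
    "bf16/fp16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Return the memory for the weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


if __name__ == "__main__":
    for precision, nbytes in BYTES_PER_PARAM.items():
        print(f"{precision:>10}: {weight_memory_gb(PARAMS, nbytes):,.0f} GB")
```

Under these assumptions, 16-bit weights alone come to roughly 256 GB, so self-hosting would generally mean spreading the model across several accelerators or using a quantized variant if one is available.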

Pricing

The model likely follows a freemium or open-source approach: the core weights are available on Hugging Face, so organizations can run inference on their own infrastructure. Self-hosting may still involve hardware and maintenance costs.
